Data Integration Services

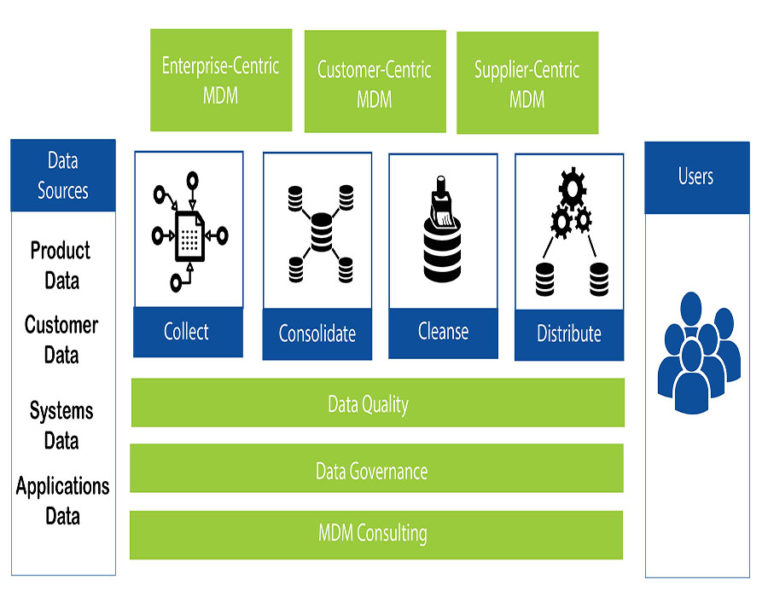

Data integration tools and solutions combine data from multiple sources across an organization to support an accurate, end-to-end, up-to-date data pipeline. Master data management (MDM) is a technology-enabled discipline in which business and IT work together to ensure the uniformity, accuracy, stewardship, semantic consistency and accountability of the enterprise’s official shared master data assets.

The data ingestion process moves data from a variety of sources to a storage location such as a data warehouse or data lake. Data can be ingested as a real-time stream or in batches, and the process typically includes cleaning and standardizing the data so it is ready for a data analytics tool. Examples of data ingestion include migrating your data to the cloud or building a data warehouse, data lake or data lakehouse.
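As a rough illustration of batch ingestion with a cleaning and standardizing step, here is a minimal Python sketch. The record layout, the `customers` table, the accepted date formats and the in-memory SQLite database standing in for a warehouse are all assumptions made for the example, not a description of any particular toolchain.

```python
import sqlite3
from datetime import datetime

# Hypothetical raw records, as they might arrive from two source systems.
RAW_BATCH = [
    {"email": "  Ada@Example.com ", "signup": "2024-03-01"},
    {"email": "bob@example.com", "signup": "03/05/2024"},  # US-style date
    {"email": "", "signup": "2024-03-09"},                 # unusable: no email
]

DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y")  # assumed source date formats


def standardize(record):
    """Return a cleaned copy of the record, or None if it cannot be used."""
    email = record["email"].strip().lower()
    if not email:
        return None
    for fmt in DATE_FORMATS:
        try:
            signup = datetime.strptime(record["signup"], fmt).date().isoformat()
            return {"email": email, "signup": signup}
        except ValueError:
            continue  # try the next known format
    return None


def ingest_batch(conn, batch):
    """Clean a batch, load it into the target table, return rows loaded."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customers (email TEXT PRIMARY KEY, signup TEXT)"
    )
    cleaned = [r for r in map(standardize, batch) if r is not None]
    conn.executemany(
        "INSERT OR REPLACE INTO customers (email, signup) VALUES (:email, :signup)",
        cleaned,
    )
    conn.commit()
    return len(cleaned)


conn = sqlite3.connect(":memory:")  # stand-in for a data warehouse
loaded = ingest_batch(conn, RAW_BATCH)
rows = conn.execute("SELECT email, signup FROM customers ORDER BY email").fetchall()
print(loaded, rows)
```

A streaming pipeline could apply the same `standardize` step to one record at a time as it arrives, instead of to a whole batch.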

Integrate data from all major platforms

To avoid data inconsistencies, you need to establish a single version of the truth in your data. A unified source of data lets you ask questions and have confidence in the answers your queries provide. Through Master Data Management (MDM) you can have a single view of customers, products, suppliers, inventory, employees and any other entities that are important to you.

Our process includes:

- Understanding the goals of your business and how an MDM strategy can fulfill them

- Identifying the critical master data

- Determining how data is organized and stored

- Identifying owners of data

- Evaluating whether a single-domain or multi-domain approach is best

- Determining whether the Master Data Management implementation should be operational, enterprise, or analytical

- Developing the master data model

- Identifying every task that needs to go through a well-developed governance program

- Ensuring quality control

A virtualized, mediated-schema approach makes it convenient to add new sources: you simply construct an adapter or an application software blade for each one. This contrasts with ETL systems or a single-database solution, which require manually integrating each entire new data set into the system. Virtual ETL solutions use a virtual mediated schema to implement data harmonization, in which data are copied field by field from the designated “master” source to the defined targets. Advanced data virtualization also builds on object-oriented modeling to construct a virtual mediated schema or virtual metadata repository, using a hub-and-spoke architecture.
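The adapter idea and the field-by-field copy can be sketched in a few lines of Python. Everything named here (`CrmAdapter`, `BillingAdapter`, `VirtualLayer`, `harmonize` and the field names) is hypothetical and chosen only for illustration; real virtualization products expose their own interfaces.

```python
from __future__ import annotations


class CrmAdapter:
    """Maps a hypothetical CRM's field names onto the mediated schema."""

    def __init__(self, rows: list[dict]):
        self._rows = rows

    def fetch(self) -> list[dict]:
        return [{"customer_id": r["id"], "name": r["full_name"]} for r in self._rows]


class BillingAdapter:
    """Maps a hypothetical billing system onto the same mediated schema."""

    def __init__(self, rows: list[dict]):
        self._rows = rows

    def fetch(self) -> list[dict]:
        return [{"customer_id": r["cust_no"], "name": r["customer"]} for r in self._rows]


class VirtualLayer:
    """Hub of a hub-and-spoke layout: queries fan out to registered adapters."""

    def __init__(self):
        self._adapters = {}

    def register(self, name: str, adapter) -> None:
        # Adding a source only requires an object with a fetch() method.
        self._adapters[name] = adapter

    def query(self) -> list[dict]:
        return [
            {**row, "source": name}
            for name, adapter in self._adapters.items()
            for row in adapter.fetch()
        ]


def harmonize(master: dict, target: dict, fields: list[str]) -> dict:
    """Copy the listed fields, one by one, from the master record to a target."""
    updated = dict(target)
    for field in fields:
        updated[field] = master[field]
    return updated


layer = VirtualLayer()
layer.register("crm", CrmAdapter([{"id": 1, "full_name": "Ada Lovelace"}]))
layer.register("billing", BillingAdapter([{"cust_no": 1, "customer": "A. Lovelace"}]))
unified = layer.query()

# Treat the CRM row as the designated master and push its name to billing.
fixed = harmonize(unified[0], unified[1], ["name"])
print(unified, fixed)
```

The source systems are never copied wholesale; only the adapter for a new source has to be written, which is the convenience the paragraph above describes.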

Each data source is disparate and, as such, is not designed to support reliable joins with other data sources. Data virtualization and data federation therefore depend on accidental data commonality to combine data and information from disparate data sets. Because data values are not consistently shared across data sources, the return set may be inaccurate, incomplete, and impossible to validate.
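A small Python sketch shows how a join that relies on accidental commonality can silently lose rows. The two record sets and their field names are invented for the example.

```python
# Two hypothetical sources that describe the same customers independently.
crm = [
    {"name": "Acme Corp", "region": "EU"},
    {"name": "Globex", "region": "US"},
]
billing = [
    {"customer": "ACME Corporation", "balance": 120},  # same company, other spelling
    {"customer": "Globex", "balance": 75},
]


def join_on_name(left, right):
    """Join on the customer name, the only value the two sources happen to share."""
    index = {r["customer"]: r for r in right}
    return [
        {**row, "balance": index[row["name"]]["balance"]}
        for row in left
        if row["name"] in index
    ]


joined = join_on_name(crm, billing)

# Rows that exist in the CRM but found no partner in billing.
billing_names = {r["customer"] for r in billing}
unmatched = [row["name"] for row in crm if row["name"] not in billing_names]

print(joined)     # only the Globex row survives the join
print(unmatched)  # the Acme row is dropped without any error
```

Nothing in either source signals that "Acme Corp" and "ACME Corporation" are the same entity, so the incomplete result cannot be validated from the data alone.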

One solution is to recast the disparate databases so that they can be integrated without the need for ETL. The recast databases support commonality constraints, so referential integrity may be enforced between databases. They also provide designed data access paths with data value commonality across databases.

We have been increasing productivity and efficiency!

Partner with Goli Technologies to obtain time-relevant, mission-critical support in the areas of application integration, data management and integration, cloud application management and analytics solutions.

Other Services

Generative AI

ERP

Mobile & Web App Development

Get high-performance, scalable mobile and web app development services for your business.

Cloud Integration

We help you migrate existing applications or deploy new ones, either fully in the cloud or in a hybrid on-premises setup, with AWS and/or Azure.

IT Staff Augmentation Services

Hire skilled IT resources on demand to grow your existing team or add specific technical expertise to boost your project.

Get In Touch

Enough about us. Let's talk about you and your project!

Drop us a line about your project via the contact form below, and we will contact you within a day. All submitted information will be kept confidential.